Impactful AI Research

Publications

International Conference on Machine Learning

ICML 2026 | Agentic Monte Carlo: Reinforcement Learning for Black-Box LLM Agents

Abstract

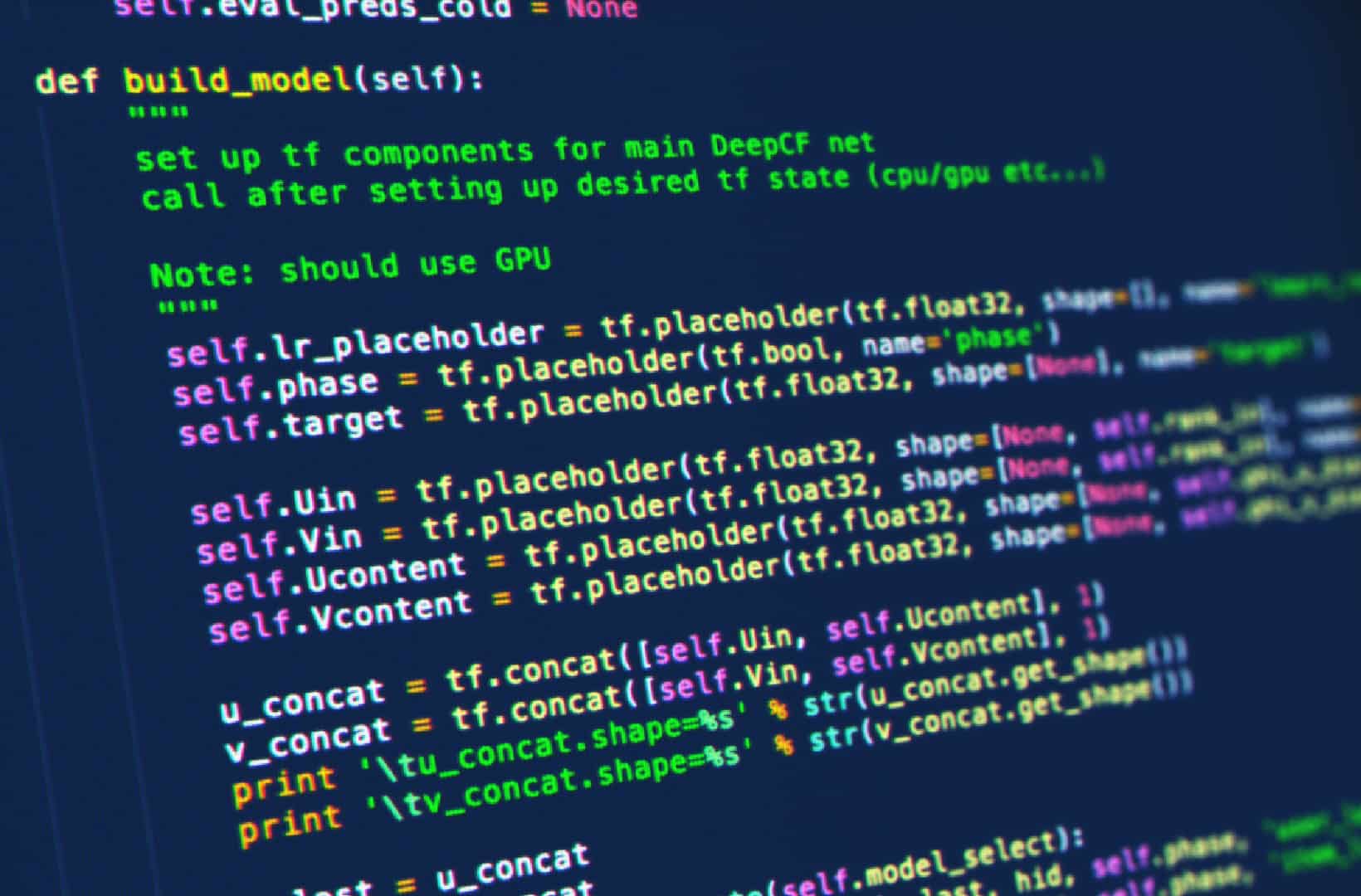

LLM agents operate in two distinct regimes: open-weight agents amenable to reinforcement learning (RL) and black-box agents whose behaviour must be controlled purely at test time. Although black-box agents are often backed by state-of-the-art proprietary LLMs, API-only access precludes parameter-level optimization, rendering most RL methods inapplicable. To address this limitation, we turn to a known equivalence between RL and Bayesian inference. We propose Agentic Monte Carlo (AMC) to directly sample from the optimal policy of a black-box agent rather than training it through RL. The optimal policy is a posterior over trajectories whose prior we define as the fixed black-box LLM agent. We employ Sequential Monte Carlo to sample from this posterior by learning a value function to steer the agent while leaving the underlying black-box model unchanged. We validate AMC on three diverse environments from the AgentGym benchmark, demonstrating significant improvements over prompting baselines and even outperforming Group Relative Policy Optimization (GRPO) as we scale the test-time compute of our method. AMC demonstrates the feasibility of performing principled RL-style optimization of black-box LLM agents.
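As a rough illustration of the Sequential Monte Carlo steering described in this abstract, the toy sketch below resamples partial trajectories of a fixed sampler according to changes in a value function. Everything in it is an assumption made for illustration: `black_box_step` is a random walk standing in for a black-box agent, `value` is hand-written rather than learned, and the exponential weighting with a `temperature` is one common choice, not necessarily the one used in AMC.

```python
import math
import random


def black_box_step(state, rng):
    """Stand-in for one step of a fixed black-box agent (the prior): a random walk."""
    return state + rng.choice([-1, 1])


def value(state, goal):
    """Hypothetical value function. The abstract learns it; here it is hand-written."""
    return -abs(goal - state)


def smc_steer(num_particles, horizon, goal, temperature=1.0, seed=0):
    """Steer the fixed sampler with SMC: propose, weight by value change, resample."""
    rng = random.Random(seed)
    particles = [0] * num_particles
    for _ in range(horizon):
        # The prior itself is never modified; we only call it.
        proposals = [black_box_step(s, rng) for s in particles]
        # Incremental weight: steps that increase the value get more mass.
        weights = [
            math.exp((value(new, goal) - value(old, goal)) / temperature)
            for old, new in zip(particles, proposals)
        ]
        # Multinomial resampling concentrates compute on promising particles.
        particles = rng.choices(proposals, weights=weights, k=num_particles)
    return particles


def mean(values):
    return sum(values) / len(values)
```

With a very large `temperature` the weights become nearly uniform and the particles behave like the unsteered prior, which gives a simple baseline to compare against.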

International Conference on Machine Learning

ICML 2026 | Conf-Gen: Conformal Uncertainty Quantification for Generative Models

International Conference on Machine Learning

ICML 2026 | Beyond Procedure: Substantive Fairness in Conformal Prediction

Abstract

Conformal prediction (CP) offers distribution-free uncertainty quantification for machine learning models, yet its interplay with fairness in downstream decision-making remains underexplored. Moving beyond CP as a standalone operation (procedural fairness), we analyze the holistic decision-making pipeline to evaluate substantive fairness, i.e., the equity of downstream outcomes. Theoretically, we derive an upper bound that decomposes prediction-set size disparity into interpretable components, clarifying how label-clustered CP helps control method-driven contributions to unfairness. To facilitate scalable empirical analysis, we introduce an LLM-in-the-loop evaluator that approximates human assessment of substantive fairness across diverse modalities. Our experiments reveal that label-clustered CP variants consistently deliver superior substantive fairness. Finally, we empirically show that equalized set sizes, rather than coverage, strongly correlate with improved substantive fairness, enabling practitioners to design fairer CP systems.

International Conference on Learning Representations

ICLR 2026 | Textual Bayes: Quantifying Uncertainty in LLM-Based Systems

Abstract

Although large language models (LLMs) are becoming increasingly capable of solving challenging real-world tasks, accurately quantifying their uncertainty remains a critical open problem, one that limits their applicability in high-stakes domains. This challenge is further compounded by the closed-source, black-box nature of many state-of-the-art LLMs. Moreover, LLM-based systems can be highly sensitive to the prompts that bind them together, which often require significant manual tuning (i.e., prompt engineering). In this work, we address these challenges by viewing LLM-based systems through a Bayesian lens. We interpret prompts as textual parameters in a statistical model, allowing us to use a small training dataset to perform Bayesian inference over these prompts. This novel perspective enables principled uncertainty quantification over both the model’s textual parameters and its downstream predictions, while also incorporating prior beliefs about these parameters expressed in free-form text. To perform Bayesian inference (a difficult problem even for well-studied data modalities), we introduce Metropolis-Hastings through LLM Proposals (MHLP), a novel Markov chain Monte Carlo (MCMC) algorithm that combines prompt optimization techniques with standard MCMC methods. MHLP is a turnkey modification to existing LLM pipelines, including those that rely exclusively on closed-source models. Empirically, we demonstrate that our method yields improvements in both predictive accuracy and uncertainty quantification (UQ) on a range of LLM benchmarks and UQ tasks. More broadly, our work demonstrates a viable path for incorporating methods from the rich Bayesian literature into the era of LLMs, paving the way for more reliable and calibrated LLM-based systems.
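To make the MCMC ingredient concrete, here is a minimal Metropolis-Hastings sketch over a tiny set of candidate prompts. It is not MHLP: the LLM proposal is replaced by a fixed, hypothetical distribution (`PROPOSAL`), and the posterior over prompts (`POSTERIOR`) is made up rather than computed from data. The sketch only shows the standard acceptance rule for an asymmetric proposal.

```python
import math
import random


def metropolis_hastings(log_target, propose, log_q, init, steps, seed=0):
    """Generic Metropolis-Hastings; log_q(to, frm) returns log q(to | frm)."""
    rng = random.Random(seed)
    state, samples = init, []
    for _ in range(steps):
        cand = propose(state, rng)
        # Acceptance ratio with the correction for an asymmetric proposal.
        log_alpha = (log_target(cand) + log_q(state, cand)
                     - log_target(state) - log_q(cand, state))
        if rng.random() < math.exp(min(0.0, log_alpha)):
            state = cand
        samples.append(state)
    return samples


# Hypothetical textual parameters and an invented posterior over them.
PROMPTS = ["be terse", "think step by step", "cite sources"]
POSTERIOR = {"be terse": 0.2, "think step by step": 0.5, "cite sources": 0.3}
# Stand-in for an LLM proposal: it ignores the current prompt entirely.
PROPOSAL = {"be terse": 0.6, "think step by step": 0.2, "cite sources": 0.2}


def log_target(prompt):
    return math.log(POSTERIOR[prompt])


def propose(state, rng):
    return rng.choices(PROMPTS, weights=[PROPOSAL[p] for p in PROMPTS])[0]


def log_q(to, frm):
    return math.log(PROPOSAL[to])
```

Running `metropolis_hastings(log_target, propose, log_q, "be terse", 40000)` should visit each prompt roughly in proportion to `POSTERIOR`, even though the proposal favours a different prompt.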

Conference of the European Chapter of the Association for Computational Linguistics

EACL 2026 | Classifying and Addressing the Diversity of Errors in Retrieval-Augmented Generation Systems

Abstract

Retrieval-augmented generation (RAG) is a prevalent approach for building LLM-based question-answering systems that can take advantage of external knowledge databases. Due to the complexity of real-world RAG systems, there are many potential causes for erroneous outputs. Understanding the range of errors that can occur in practice is crucial for robust deployment. We present a new taxonomy of the error types that can occur in realistic RAG systems, examples of each, and practical advice for addressing them. Additionally, we curate a dataset of erroneous RAG responses annotated by error types. We then propose an auto-evaluation method aligned with our taxonomy that can be used in practice to track and address errors during development.

Neural Information Processing Systems

NeurIPS 2025 Spotlight | CausalPFN: Amortized Causal Effect Estimation via In-Context Learning

Abstract

Causal effect estimation from observational data is fundamental across various applications. However, selecting an appropriate estimator from dozens of specialized methods demands substantial manual effort and domain expertise. We present CausalPFN, a single transformer that amortizes this workflow: trained once on a large library of simulated data-generating processes that satisfy ignorability, it infers causal effects for new observational datasets out of the box. CausalPFN combines ideas from Bayesian causal inference with the large-scale training protocol of prior-fitted networks (PFNs), learning to map raw observations directly to causal effects without any task-specific adjustment. Our approach achieves superior average performance on heterogeneous and average treatment effect estimation benchmarks (IHDP, Lalonde, ACIC). Moreover, it shows competitive performance for real-world policy making on uplift modeling tasks. CausalPFN provides calibrated uncertainty estimates to support reliable decision-making based on Bayesian principles. This ready-to-use model does not require any further training or fine-tuning and takes a step toward automated causal inference.

Neural Information Processing Systems

NeurIPS 2025 | TabDPT: Scaling Tabular Foundation Models on Real Data

Abstract

Tabular data is one of the most ubiquitous sources of information worldwide, spanning a wide variety of domains. This inherent heterogeneity has slowed the development of Tabular Foundation Models (TFMs) capable of fast generalization to unseen datasets. In-Context Learning (ICL) has recently emerged as a promising solution for TFMs, enabling dynamic adaptation to new tasks without additional tuning. While many studies have attempted to re-purpose large language models for tabular ICL, they have had limited success, so recent works have focused on developing tabular-specific foundation models. In this work, we propose an approach to combine ICL-based retrieval with self-supervised learning to train tabular foundation models. We also investigate the utility of real vs. synthetic data for model pre-training, and show that real data can contain useful signal not easily captured in synthetic training. Specifically, we show that incorporating real data during the pre-training phase can lead to significantly faster training and better downstream generalization to unseen data. Our resulting model, TabDPT, achieves top performance on both regression (CTR23) and classification (CC18) benchmarks. Importantly, we also demonstrate that with our pre-training procedure, scaling both model and data size leads to consistent performance improvements that follow power laws. This echoes scaling laws in LLMs and other foundation models, and suggests that Internet-scale TFMs can be achievable. We open-source our full pipeline.

Neural Information Processing Systems

NeurIPS 2025 | Document Summarization with Conformal Importance Guarantees

Abstract

Automatic summarization systems have advanced rapidly with large language models (LLMs), yet they still lack reliable guarantees on inclusion of critical content in high-stakes domains like healthcare, law, and finance. In this work, we introduce Conformal Importance Summarization, the first framework for importance-preserving summary generation which uses conformal prediction to provide rigorous, distribution-free coverage guarantees. By calibrating thresholds on sentence-level importance scores, we enable extractive document summarization with user-specified coverage and recall rates over critical content. Our method is model-agnostic, requires only a small calibration set, and seamlessly integrates with existing black-box LLMs. Experiments on established summarization benchmarks demonstrate that Conformal Importance Summarization achieves the theoretically assured information coverage rate. Our work suggests that Conformal Importance Summarization can be combined with existing techniques to achieve reliable, controllable automatic summarization, paving the way for safer deployment of AI summarization tools in critical applications.
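The idea of calibrating a threshold on sentence-level importance scores can be sketched as follows. This is one plausible construction under stated assumptions, not necessarily the paper's procedure: each calibration document comes with per-sentence scores and binary importance labels, `beta` is the target recall over important sentences, and `alpha` is the tolerated failure rate. For each document we find the largest threshold that still reaches recall `beta`, then take a conservative lower quantile of those thresholds.

```python
import math


def doc_threshold(scores, important, beta):
    """Largest threshold t such that keeping score >= t reaches recall >= beta."""
    imp = sorted((s for s, y in zip(scores, important) if y), reverse=True)
    if not imp:
        return math.inf  # no important sentences: any threshold is fine
    need = max(1, math.ceil(beta * len(imp)))
    return imp[need - 1]


def calibrate(cal_docs, alpha, beta):
    """Conformal lower quantile of per-document thresholds.

    cal_docs is a list of (scores, important) pairs. Under exchangeability,
    a new document reaches recall >= beta with probability >= 1 - alpha.
    """
    thresholds = sorted(doc_threshold(s, y, beta) for s, y in cal_docs)
    k = math.floor(alpha * (len(thresholds) + 1))
    return thresholds[k - 1] if k >= 1 else -math.inf


def summarize(sentences, scores, threshold):
    """Extractive summary: keep every sentence scoring at least the threshold."""
    return [sent for sent, s in zip(sentences, scores) if s >= threshold]
```

When the calibration set is too small for the requested `alpha`, `calibrate` returns negative infinity, so `summarize` keeps every sentence rather than risk dropping critical content.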

Transactions on Machine Learning Research

TMLR 2025 | On Convolutions, Intrinsic Dimension, and Diffusion Models

Abstract

The manifold hypothesis asserts that data of interest in high-dimensional ambient spaces, such as image data, lies on unknown low-dimensional submanifolds. Diffusion models (DMs), which operate by convolving data with progressively larger amounts of Gaussian noise and then learning to revert this process, have risen to prominence as the most performant generative models, and are known to be able to learn distributions with low-dimensional support. For a given datum in one of these submanifolds, we should thus intuitively expect DMs to have implicitly learned its corresponding local intrinsic dimension (LID), i.e. the dimension of the submanifold it belongs to. Kamkari et al. (2024b) recently showed that this is indeed the case by linking this LID to the rate of change of the log marginal densities of the DM with respect to the amount of added noise, resulting in an LID estimator known as FLIPD. LID estimators such as FLIPD have a plethora of uses: among others, they quantify the complexity of a given datum, and can be used to detect outliers, adversarial examples and AI-generated text. FLIPD achieves state-of-the-art performance at LID estimation, yet its theoretical underpinnings are incomplete since Kamkari et al. (2024b) only proved its correctness under the highly unrealistic assumption of affine submanifolds. In this work we bridge this gap by formally proving the correctness of FLIPD under realistic assumptions. Additionally, we show that an analogous result holds when Gaussian convolutions are replaced with uniform ones, and discuss the relevance of this result.
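The link between LID and the rate of change of the log density with respect to the noise level can be checked numerically in a simple affine case. The sketch below is a closed-form toy, not the FLIPD estimator: it assumes a standard Gaussian supported on a `d`-dimensional linear subspace of `R^D`, convolved with isotropic Gaussian noise of standard deviation `sigma`, and it evaluates `D + d(log p_sigma)/d(log sigma)` at the origin by finite differences. The function names and the choice of test point are illustrative.

```python
import math


def log_density_at_origin(sigma, d, D):
    """Log density at x = 0 of N(0, I_d) on a d-dim subspace of R^D, plus N(0, sigma^2 I_D) noise."""
    # Along the subspace the variances add: 1 + sigma^2.
    on_manifold = -0.5 * d * math.log(2 * math.pi * (1 + sigma ** 2))
    # Off the subspace only the noise contributes: sigma^2.
    off_manifold = -0.5 * (D - d) * math.log(2 * math.pi * sigma ** 2)
    return on_manifold + off_manifold


def lid_estimate(sigma, d, D, eps=1e-4):
    """D plus the central-difference derivative of the log density w.r.t. log sigma."""
    lo = log_density_at_origin(sigma * math.exp(-eps), d, D)
    hi = log_density_at_origin(sigma * math.exp(eps), d, D)
    return D + (hi - lo) / (2 * eps)
```

In this toy setting the estimate equals `d - d * sigma**2 / (1 + sigma**2)` analytically, so it approaches the true dimension `d` as `sigma` shrinks; for example, `lid_estimate(0.01, d=3, D=10)` is very close to 3.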

SIGIR Conference on Research and Development in Information Retrieval

SIGIR 2025 | Response Quality Assessment for Retrieval-Augmented Generation via Conditional Conformal Factuality

Abstract

Existing research on Retrieval-Augmented Generation (RAG) primarily focuses on improving overall question-answering accuracy, often overlooking the quality of sub-claims within generated responses. Recent methods that attempt to improve RAG trustworthiness, such as auto-evaluation metrics, often lack probabilistic guarantees or require ground truth answers, failing to provide reliable and scalable assessment. To address these limitations, we propose Conformal-RAG, a novel framework inspired by recent applications of conformal prediction in large language models (LLMs). Conformal-RAG leverages conformal prediction and internal information from the RAG mechanism to offer statistical guarantees on response quality. It ensures conditional coverage (potentially spanning multiple sub-domains) without requiring manual calibration of conformal sets, making it suitable for complex RAG applications. Compared to existing RAG auto-evaluation methods, Conformal-RAG offers statistical guarantees on the quality of refined sub-claims, ensuring response reliability without needing ground truth answers. Additionally, our experiments demonstrate that by leveraging RAG internal information, Conformal-RAG retains more high-quality sub-claims from the response while maintaining the same reliability guarantee as naïve adaptations of conformal prediction in LLMs. Specifically, Conformal-RAG retains 60% more high-quality sub-claims in biography generation tasks and 20% more in medication question-answering tasks.

International Conference on Learning Representations

ICLR 2025 Spotlight | A Geometric Framework for Understanding Memorization in Generative Models

Abstract

As deep generative models have progressed, recent work has shown them to be capable of memorizing and reproducing training datapoints when deployed. These findings call into question the usability of generative models, especially in light of the legal and privacy risks brought about by memorization. To better understand this phenomenon, we propose the manifold memorization hypothesis (MMH), a geometric framework which leverages the manifold hypothesis into a clear language in which to reason about memorization. We propose to analyze memorization in terms of the relationship between the dimensionalities of (i) the ground truth data manifold and (ii) the manifold learned by the model. This framework provides a formal standard for “how memorized” a datapoint is and systematically categorizes memorized data into two types: memorization driven by overfitting and memorization driven by the underlying data distribution. By analyzing prior work in the context of the MMH, we explain and unify assorted observations in the literature. We empirically validate the MMH using synthetic data and image datasets up to the scale of Stable Diffusion, developing new tools for detecting and preventing generation of memorized samples in the process.

International Conference on Learning Representations

ICLR 2025 Spotlight | Conformal Prediction Sets Can Cause Disparate Impact

Abstract

Although conformal prediction is a promising method for quantifying the uncertainty of machine learning models, the prediction sets it outputs are not inherently actionable. Many applications require a single output to act on, not several. To overcome this, prediction sets can be provided to a human who then makes an informed decision. In any such system it is crucial to ensure the fairness of outcomes across protected groups, and researchers have proposed that Equalized Coverage be used as the standard for fairness. By conducting experiments with human participants, we demonstrate that providing prediction sets can increase the unfairness of their decisions. Disquietingly, we find that providing sets that satisfy Equalized Coverage actually increases unfairness compared to marginal coverage. Instead of equalizing coverage, we propose to equalize set sizes across groups which empirically leads to more fair outcomes.

Association for Computational Linguistics

NAACL 2025 Oral | MSc-SQL: Multi-Sample Critiquing Small Language Models For Text-To-SQL Translation

Abstract

Text-to-SQL generation enables non-experts to interact with databases via natural language. Recent advances rely on large closed-source models like GPT-4 that present challenges in accessibility, privacy, and latency. To address these issues, we focus on developing small, efficient, and open-source text-to-SQL models. We demonstrate the benefits of sampling multiple candidate SQL generations and propose our method, MSc-SQL, to critique them using associated metadata. Our sample critiquing model evaluates multiple outputs simultaneously, achieving state-of-the-art performance compared to other open-source models while remaining competitive with larger models at a much lower cost.

Neural Information Processing Systems

NeurIPS 2024 Spotlight | A Geometric View of Data Complexity: Efficient Local Intrinsic Dimension Estimation with Diffusion Models

Abstract

High-dimensional data commonly lies on low-dimensional submanifolds, and estimating the local intrinsic dimension (LID) of a datum — i.e. the dimension of the submanifold it belongs to — is a longstanding problem. LID can be understood as the number of local factors of variation: the more factors of variation a datum has, the more complex it tends to be. Estimating this quantity has proven useful in contexts ranging from generalization in neural networks to detection of out-of-distribution data, adversarial examples, and AI-generated text. The recent successes of deep generative models present an opportunity to leverage them for LID estimation, but current methods based on generative models produce inaccurate estimates, require more than a single pre-trained model, are computationally intensive, or do not exploit the best available deep generative models, i.e. diffusion models (DMs). In this work, we show that the Fokker-Planck equation associated with a DM can provide a LID estimator which addresses all the aforementioned deficiencies. Our estimator, called FLIPD, is compatible with all popular DMs, and outperforms existing baselines on LID estimation benchmarks. We also apply FLIPD on natural images where the true LID is unknown. Compared to competing estimators, FLIPD exhibits a higher correlation with non-LID measures of complexity, better matches a qualitative assessment of complexity, and is the only estimator to remain tractable with high-resolution images at the scale of Stable Diffusion.

Neural Information Processing Systems

NeurIPS 2024 | Retrieval & Fine-Tuning for In-Context Tabular Models

Abstract

Tabular data is a pervasive modality spanning a wide range of domains, and the inherent diversity poses a considerable challenge for deep learning. Recent advancements using transformer-based in-context learning have shown promise on smaller and less complex datasets, but have struggled to scale to larger and more complex ones. To address this limitation, we propose a combination of retrieval and fine-tuning: we can adapt the transformer to a local subset of the data by collecting nearest neighbours, and then perform task-specific fine-tuning with this retrieved set of neighbours in context. Using TabPFN as the base model — currently the best tabular in-context learner — and applying our retrieval and fine-tuning scheme on top results in what we call a locally-calibrated PFN, or LoCalPFN. We conduct extensive evaluation on 95 datasets curated by TabZilla from OpenML, upon which we establish a new state-of-the-art with LoCalPFN — even with respect to tuned tree-based models. Notably, we show a significant boost in performance compared to the base in-context model, demonstrating the efficacy of our approach and advancing the frontier of deep learning in tabular data.
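The retrieval half of this scheme, collecting nearest neighbours to form a local context for each query, can be sketched in a few lines. This is only an illustration under simple assumptions (Euclidean distance on raw features, a brute-force search, and a hypothetical helper name `retrieve_context`); the fine-tuning step and the in-context model itself are not shown.

```python
import math


def retrieve_context(train_X, train_y, query, k):
    """Return the k training rows closest to the query, with their labels."""
    # Brute-force search: rank all rows by Euclidean distance to the query.
    order = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], query))
    nearest = order[:k]
    return [train_X[i] for i in nearest], [train_y[i] for i in nearest]


# Tiny usage example with made-up rows.
X = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0], [0.2, 0.1]]
y = [0, 0, 1, 0]
context_X, context_y = retrieve_context(X, y, query=[0.1, 0.1], k=2)
```

The retrieved rows would then be passed as the in-context examples for that query instead of the full training set.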

Transactions on Machine Learning Research

TMLR 2024 | Deep Generative Models through the Lens of the Manifold Hypothesis: A Survey and New Connections

Abstract

In recent years there has been increased interest in understanding the interplay between deep generative models (DGMs) and the manifold hypothesis. Research in this area focuses on understanding the reasons why commonly-used DGMs succeed or fail at learning distributions supported on unknown low-dimensional manifolds, as well as developing new models explicitly designed to account for manifold-supported data. This manifold lens provides both clarity as to why some DGMs (e.g. diffusion models and some generative adversarial networks) empirically surpass others (e.g. likelihood-based models such as variational autoencoders, normalizing flows, or energy-based models) at sample generation, and guidance for devising more performant DGMs. We carry out the first survey of DGMs viewed through this lens, making two novel contributions along the way. First, we formally establish that numerical instability of likelihoods in high ambient dimensions is unavoidable when modelling data with low intrinsic dimension. We then show that DGMs on learned representations of autoencoders can be interpreted as approximately minimizing Wasserstein distance: this result, which applies to latent diffusion models, helps justify their outstanding empirical results. The manifold lens provides a rich perspective from which to understand DGMs, which we aim to make more accessible and widespread.

Transactions on Machine Learning Research

TMLR 2024 | Augment then Smooth: Reconciling Differential Privacy with Certified Robustness

Abstract

Machine learning models are susceptible to a variety of attacks that can erode trust, including attacks against the privacy of training data, and adversarial examples that jeopardize model accuracy. Differential privacy and certified robustness are effective frameworks for combating these two threats respectively, as they each provide future-proof guarantees. However, we show that standard differentially private model training is insufficient for providing strong certified robustness guarantees. Indeed, combining differential privacy and certified robustness in a single system is non-trivial, leading previous works to introduce complex training schemes that lack flexibility. In this work, we present DP-CERT, a simple and effective method that achieves both privacy and robustness guarantees simultaneously by integrating randomized smoothing into standard differentially private model training. Compared to the leading prior work, DP-CERT gives up to a 2.5× increase in certified accuracy for the same differential privacy guarantee on CIFAR10. Through in-depth per-sample metric analysis, we find that larger certifiable radii correlate with smaller local Lipschitz constants, and show that DP-CERT effectively reduces Lipschitz constants compared to other differentially private training methods.
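For readers unfamiliar with randomized smoothing, the sketch below shows its inference-time core: classify many Gaussian-perturbed copies of an input and take a majority vote. It does not cover differentially private training or the DP-CERT method itself. The `certified_radius` helper uses the well-known binary-case bound `sigma * Phi^{-1}(p_lower)`, where `p_lower` is assumed to be a valid lower confidence bound on the top-class probability; the raw vote frequency returned by `smoothed_predict` is only an estimate, not such a bound.

```python
import random
from collections import Counter
from statistics import NormalDist


def smoothed_predict(base_classifier, x, sigma, num_samples=1000, seed=0):
    """Majority vote of the base classifier under Gaussian input noise."""
    rng = random.Random(seed)
    votes = Counter()
    for _ in range(num_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        votes[base_classifier(noisy)] += 1
    label, count = votes.most_common(1)[0]
    return label, count / num_samples


def certified_radius(sigma, p_lower):
    """L2 radius sigma * Phi^{-1}(p_lower); zero when p_lower <= 0.5.

    p_lower must be strictly below 1, otherwise inv_cdf raises an error.
    """
    if p_lower <= 0.5:
        return 0.0
    return sigma * NormalDist().inv_cdf(p_lower)
```

For instance, a toy classifier `lambda v: int(v[0] > 0)` evaluated at `x = [2.0]` with `sigma = 0.5` votes for class 1 almost every time, and a larger `p_lower` translates into a larger certified radius.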

Computer Vision and Pattern Recognition Conference

DataCV Challenge 2nd Place | Classifier Guided Cluster Density Reduction for Dataset Selection

Abstract

We address the challenge of selecting an optimal dataset from a source pool with annotations to enhance performance on a target dataset derived from a different source. This is important in scenarios where it is hard to afford on-the-fly dataset annotation and is also the theme of the second Visual Data Understanding (VDU) Challenge. Our solution, the Classifier Guided Cluster Density Reduction (CCDR) framework, operates in two stages. Initially, we employ a filtering technique to identify images that align with the target dataset’s distribution. Subsequently, we implement a graph-based cluster density reduction method steered by a classifier that approximates the distance between the target distribution and source distribution. This classifier is trained to distinguish between images that resemble the target dataset and those that do not, facilitating the pruning process shown in Figure 1. Our approach maintains a balance between selecting pertinent images that match the target distribution and eliminating redundant ones that do not contribute to the enhancement of the detection model. We demonstrate the superiority of our method over various baselines in object detection tasks, particularly in optimizing the training set distribution on the region100 dataset.

International Conference on Machine Learning

ICML 2024 | Conformal Prediction Sets Improve Human Decision Making

Abstract

In response to everyday queries, humans explicitly signal uncertainty and offer alternative answers when they are unsure. Machine learning models that output calibrated prediction sets through conformal prediction mimic this human behaviour; larger sets signal greater uncertainty while providing alternatives. In this work, we study the usefulness of conformal prediction sets as an aid for human decision making by conducting a pre-registered randomized controlled trial with conformal prediction sets provided to human subjects. With statistical significance, we find that when humans are given conformal prediction sets their accuracy on tasks improves compared to fixed-size prediction sets with the same coverage guarantee. The results show that quantifying model uncertainty with conformal prediction is helpful for human-in-the-loop decision making and human-AI teams.
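A minimal sketch of split conformal prediction for classification shows how such sets grow with uncertainty. It assumes the common nonconformity score `1 - p(true label)` and a held-out calibration set; the function names are illustrative and this is not the study's experimental code.

```python
import math


def conformal_quantile(cal_scores, alpha):
    """Finite-sample corrected (1 - alpha) quantile of calibration scores."""
    n = len(cal_scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too few calibration points: include every label
    return sorted(cal_scores)[k - 1]


def prediction_set(probs, qhat):
    """All labels whose score 1 - p does not exceed the calibrated quantile."""
    return [label for label, p in enumerate(probs) if 1.0 - p <= qhat]


# Calibration scores are 1 - p(true label) on held-out examples (made-up values).
cal_scores = [0.05, 0.1, 0.2, 0.3, 0.4, 0.6, 0.9]
qhat = conformal_quantile(cal_scores, alpha=0.25)

confident = prediction_set([0.70, 0.25, 0.05], qhat)  # one clear winner
uncertain = prediction_set([0.45, 0.45, 0.10], qhat)  # two plausible labels
```

The confident input yields a single-label set while the ambiguous one yields two labels, which is the behaviour the abstract describes: larger sets signal greater uncertainty while providing alternatives.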

International Conference on Machine Learning

ICML 2024 | A Geometric Explanation of the Likelihood OOD Detection Paradox

Abstract

Likelihood-based deep generative models (DGMs) commonly exhibit a puzzling behaviour: when trained on a relatively complex dataset, they assign higher likelihood values to out-of-distribution (OOD) data from simpler sources. Adding to the mystery, OOD samples are never generated by these DGMs despite having higher likelihoods. This two-pronged paradox has yet to be conclusively explained, making likelihood-based OOD detection unreliable. Our primary observation is that high-likelihood regions will not be generated if they contain minimal probability mass. We demonstrate how this seeming contradiction of large densities yet low probability mass can occur around data confined to low-dimensional manifolds. We also show that this scenario can be identified through local intrinsic dimension (LID) estimation, and propose a method for OOD detection which pairs the likelihoods and LID estimates obtained from a pre-trained DGM. Our method can be applied to normalizing flows and score-based diffusion models, and obtains results which match or surpass state-of-the-art OOD detection benchmarks using the same DGM backbones.

Computer Vision and Pattern Recognition Conference

CVPR 2024 Highlight | Data-Efficient Multimodal Fusion on a Single GPU

Abstract

The goal of multimodal alignment is to learn a single latent space that is shared between multimodal inputs. The most powerful models in this space have been trained using massive datasets of paired inputs and large-scale computational resources, making them prohibitively expensive to train in many practical scenarios. We surmise that existing unimodal encoders pre-trained on large amounts of unimodal data should provide an effective bootstrap to create multimodal models from unimodal ones at much lower costs. We therefore propose FuseMix, a multimodal augmentation scheme that operates on the latent spaces of arbitrary pre-trained unimodal encoders. Using FuseMix for multimodal alignment, we achieve competitive performance (and in certain cases outperform state-of-the-art methods) in both image-text and audio-text retrieval, with orders of magnitude less compute and data: for example, we outperform CLIP on the Flickr30K text-to-image retrieval task with ∼600× fewer GPU days and ∼80× fewer image-text pairs. Additionally, we show how our method can be applied to convert pre-trained text-to-image generative models into audio-to-image ones.

International Conference on Learning Representations

ICLR 2024 | Self-supervised Representation Learning from Random Data Projectors

Abstract

Self-supervised representation learning (SSRL) has advanced considerably by exploiting the transformation invariance assumption under artificially designed data augmentations. While augmentation-based SSRL algorithms push the boundaries of performance in computer vision and natural language processing, they are often not directly applicable to other data modalities, and can conflict with application-specific data augmentation constraints. This paper presents an SSRL approach that can be applied to any data modality and network architecture because it does not rely on augmentations or masking. Specifically, we show that high-quality data representations can be learned by reconstructing random data projections. We evaluate the proposed approach on a wide range of representation learning tasks that span diverse modalities and real-world applications. We show that it outperforms multiple state-of-the-art SSRL baselines. Due to its wide applicability and strong empirical results, we argue that learning from randomness is a fruitful research direction worthy of attention and further study.

Transactions on Machine Learning Research

TMLR 2024 | Neural Implicit Manifold Learning for Topology-Aware Density Estimation

Abstract

Natural data is often constrained to a low-dimensional manifold. This work focuses on the task of building theoretically principled generative models for such data. Current generative models learn the manifold by mapping a low-dimensional latent variable through a neural network. These procedures, which we call pushforward models, incur a straightforward limitation: manifolds cannot in general be represented with a single parameterization, meaning that attempts to do so will incur either computational instability or the inability to learn probability densities within the manifold. To remedy this problem, we propose to model the manifold as a neural implicit manifold: the set of zeros of a neural network. We then learn the probability density within the manifold with a constrained energy-based model, which employs a constrained variant of Langevin dynamics to train and sample from the learned manifold. In experiments on synthetic and natural data, we show that our model can learn manifold-supported distributions with complex topologies more accurately than pushforward models.

Neural Information Processing Systems Conference

NeurIPS 2023 | Exposing flaws of generative model evaluation metrics and their unfair treatment of diffusion models

Abstract

We systematically study a wide variety of image-based generative models spanning semantically-diverse datasets to understand and improve the feature extractors and metrics used to evaluate them. Using best practices in psychophysics, we measure human perception of image realism for generated samples by conducting the largest experiment evaluating generative models to date, and find that no existing metric strongly correlates with human evaluations. Comparing to 16 modern metrics for evaluating the overall performance, fidelity, diversity, and memorization of generative models, we find that the state-of-the-art perceptual realism of diffusion models as judged by humans is not reflected in commonly reported metrics such as FID. This discrepancy is not explained by diversity in generated samples, though one cause is over-reliance on Inception-V3. We address these flaws through a study of alternative self-supervised feature extractors, find that the semantic information encoded by individual networks strongly depends on their training procedure, and show that DINOv2-ViT-L/14 allows for much richer evaluation of generative models. Next, we investigate data memorization, and find that generative models do memorize training examples on simple, smaller datasets like CIFAR10, but not necessarily on more complex datasets like ImageNet. However, our experiments show that current metrics do not properly detect memorization; none in the literature is able to separate memorization from other phenomena such as underfitting or mode shrinkage. To facilitate further development of generative models and their evaluation we release all generated image datasets, human evaluation data, and a modular library to compute 16 common metrics for 8 different encoders.

Neural Information Processing Systems Conference

NeurIPS 2023 | Adversarially robust learning with uncertain perturbation sets

Abstract

In many real-world settings, the exact perturbation sets to be used by an adversary are not plausibly available to a learner. While prior literature has studied both scenarios with completely known and completely unknown perturbation sets, we propose an in-between setting of learning with respect to a class of perturbation sets. We show that in this setting we can improve on previous results with completely unknown perturbation sets, while still addressing the concerns of not having perfect knowledge of these sets in real life. In particular, we give the first positive results for the learnability of infinite Littlestone classes when having access to a perfect-attack oracle. We also consider a setting of learning with abstention, where predictions are considered robustness violations only when the wrong prediction is made within the perturbation set. We show there are classes for which perturbation-set unaware learning without query access is possible, but abstention is required.

ACM Conference on Recommender Systems

RecSys Challenge 2023 1st Place | Robust User Engagement Modeling with Transformers and Self-Supervision

Abstract

Online advertising has seen exponential growth, transforming into a vast and dynamic market that encompasses many diverse platforms such as web search, e-commerce, social media and mobile apps. The rapid growth of products and services presents a formidable challenge for advertising platforms, and accurately modeling user intent is increasingly critical for targeted ad placement. The 2023 ACM RecSys Challenge, organized by ShareChat, provides a standardized benchmark for developing and evaluating user intent models using a large dataset of impressions from the ShareChat and Moj apps. In this paper we present our approach to this challenge. We use Transformers to automatically capture interactions between different types of input features, and propose a self-supervised optimization framework based on the contrastive objective. Empirically, we demonstrate that self-supervised learning effectively reduces overfitting, improving model generalization and leading to significant gains in performance. Our team, Layer 6 AI, achieved 1st place on the final leaderboard out of over 100 teams.

Machine Learning for Healthcare

MLHC 2023 | DuETT: Dual Event Time Transformer for Electronic Health Records

Abstract

Electronic health records (EHRs) recorded in hospital settings typically contain a wide range of numeric time series data that is characterized by high sparsity and irregular observations. Effective modelling for such data must exploit its time series nature, the semantic relationship between different types of observations, and information in the sparsity structure of the data. Self-supervised Transformers have shown outstanding performance in a variety of structured tasks in NLP and computer vision. But multivariate time series data contains structured relationships over two dimensions: time and recorded event type, and straightforward applications of Transformers to time series data do not leverage this distinct structure. The quadratic scaling of self-attention layers can also significantly limit the input sequence length without appropriate input engineering. We introduce the DuETT architecture, an extension of Transformers designed to attend over both time and event type dimensions, yielding robust representations from EHR data. DuETT uses an aggregated input where sparse time series are transformed into a regular sequence with fixed length; this lowers the computational complexity relative to previous EHR Transformer models and, more importantly, enables the use of larger and deeper neural networks. When trained with self-supervised prediction tasks that provide rich and informative signals for model pre-training, our model outperforms state-of-the-art deep learning models on multiple downstream tasks from the MIMIC-IV and PhysioNet-2012 EHR datasets.

Nature Communications

Nature Communications | Decentralized federated learning through proxy model sharing

Abstract

Institutions in highly regulated domains such as finance and healthcare often have restrictive rules around data sharing. Federated learning is a distributed learning framework that enables multi-institutional collaborations on decentralized data with improved protection for each collaborator’s data privacy. In this paper, we propose a communication-efficient scheme for decentralized federated learning called ProxyFL, or proxy-based federated learning. Each participant in ProxyFL maintains two models, a private model, and a publicly shared proxy model designed to protect the participant’s privacy. Proxy models allow efficient information exchange among participants without the need of a centralized server. The proposed method eliminates a significant limitation of canonical federated learning by allowing model heterogeneity; each participant can have a private model with any architecture. Furthermore, our protocol for communication by proxy leads to stronger privacy guarantees using differential privacy analysis. Experiments on popular image datasets, and a cancer diagnostic problem using high-quality gigapixel histology whole slide images, show that ProxyFL can outperform existing alternatives with much less communication overhead and stronger privacy.

International Conference on Machine Learning

ICML 2023 | TR0N: Translator Networks for 0-Shot Plug-and-Play Conditional Generation

Abstract

We propose TR0N, a highly general framework to turn pre-trained unconditional generative models, such as GANs and VAEs, into conditional models. The conditioning can be highly arbitrary, and requires only a pre-trained auxiliary model. For example, we show how to turn unconditional models into class-conditional ones with the help of a classifier, and also into text-to-image models by leveraging CLIP. TR0N learns a lightweight stochastic mapping which “translates” between the space of conditions and the latent space of the generative model, in such a way that the generated latent corresponds to a data sample satisfying the desired condition. The translated latent samples are then further improved upon through Langevin dynamics, enabling us to obtain higher-quality data samples. TR0N requires no training data nor fine-tuning, yet can achieve a zero-shot FID of 10.9 on MS-COCO, outperforming competing alternatives not only on this metric, but also in sampling speed — all while retaining a much higher level of generality.

International Conference on Learning Representations

ICLR 2023 Spotlight | Disparate Impact in Differential Privacy from Gradient Misalignment

Abstract

As machine learning becomes more widespread throughout society, aspects including data privacy and fairness must be carefully considered, and are crucial for deployment in highly regulated industries. Unfortunately, the application of privacy enhancing technologies can worsen unfair tendencies in models. In particular, one of the most widely used techniques for private model training, differentially private stochastic gradient descent (DPSGD), frequently intensifies disparate impact on groups within data. In this work we study the fine-grained causes of unfairness in DPSGD and identify gradient misalignment due to inequitable gradient clipping as the most significant source. This observation leads us to a new method for reducing unfairness by preventing gradient misalignment in DPSGD.
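The clipping effect described above can be illustrated with a toy calculation. The following is a minimal sketch, not the authors' method: the per-example gradients and the clipping threshold are hypothetical values chosen for illustration, and the Gaussian noise that DPSGD also adds is omitted so that only the effect of clipping is visible.

```python
import math

def clip(grad, max_norm):
    """Rescale a gradient so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]

def mean(vectors):
    """Coordinate-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical per-example gradients: three small-norm examples and one
# large-norm example (standing in for an under-represented group).
per_example_grads = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [0.0, 10.0]]
clip_norm = 1.0  # hypothetical clipping threshold

true_mean = mean(per_example_grads)
clipped_mean = mean([clip(g, clip_norm) for g in per_example_grads])

# A cosine similarity below 1 means the clipped batch gradient points in a
# different direction from the unclipped one.
alignment = cosine(true_mean, clipped_mean)
print(true_mean, clipped_mean, round(alignment, 3))
```

In this toy batch only the large-norm example is shrunk by clipping, so its contribution to the averaged direction is reduced and the clipped batch gradient rotates toward the small-norm examples.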

International Conference on Learning Representations

ICLR 2023 | Verifying the Union of Manifolds Hypothesis for Image Data

Abstract

Deep learning has had tremendous success at learning low-dimensional representations of high-dimensional data. This success would be impossible if there was no hidden low-dimensional structure in data of interest; this existence is posited by the manifold hypothesis, which states that the data lies on an unknown manifold of low intrinsic dimension. In this paper, we argue that this hypothesis does not properly capture the low-dimensional structure typically present in image data. Assuming that data lies on a single manifold implies intrinsic dimension is identical across the entire data space, and does not allow for subregions of this space to have a different number of factors of variation. To address this deficiency, we consider the union of manifolds hypothesis, which states that data lies on a disjoint union of manifolds of varying intrinsic dimensions. We empirically verify this hypothesis on commonly-used image datasets, finding that indeed, observed data lies on a disconnected set and that intrinsic dimension is not constant. We also provide insights into the implications of the union of manifolds hypothesis in deep learning, both supervised and unsupervised, showing that designing models with an inductive bias for this structure improves performance across classification and generative modelling tasks. Our code is available at https://github.com/layer6ai-labs/UoMH.

International Conference on Learning Representations

ICLR 2023 | Temporal Dependencies in Feature Importance for Time Series Prediction

Abstract

Time series data introduces two key challenges for explainability methods: firstly, observations of the same feature over subsequent time steps are not independent, and secondly, the same feature can have varying importance to model predictions over time. In this paper, we propose Windowed Feature Importance in Time (WinIT), a feature removal based explainability approach to address these issues. Unlike existing feature removal explanation methods, WinIT explicitly accounts for the temporal dependence between different observations of the same feature in the construction of its importance score. Furthermore, WinIT captures the varying importance of a feature over time, by summarizing its importance over a window of past time steps. We conduct an extensive empirical study on synthetic and real-world data, compare against a wide range of leading explainability methods, and explore the impact of various evaluation strategies. Our results show that WinIT achieves significant gains over existing methods, with more consistent performance across different evaluation metrics.

ACM Conference on Recommender Systems

RecSys Challenge 2022 2nd Place | Robust User Engagement Modeling with Transformers and Self-Supervision

Abstract

Large item catalogs and constantly changing preference trends make recommendations a critically important component of every fashion e-commerce platform. However, since most users browse anonymously, historical preference data is rarely available and recommendations have to be made using only information from within the session. In the 2022 ACM RecSys challenge, Dressipi released a dataset with 1.1 million online retail sessions in the fashion domain that span an 18-month period. The goal is to predict the item purchased at the end of each session. To simulate a common production scenario all sessions are anonymous and no previous user preference information is available. In this paper, we present our approach to this challenge. We leverage the Transformer architecture with two different learning objectives inspired by the self-supervised learning techniques to improve generalization. Our team, LAYER 6, achieves strong results placing 2nd on the final leaderboard out of over 300 teams.

International Conference on Machine Learning

ICML 2022 | Bayesian Nonparametrics for Offline Skill Discovery

Abstract

Skills or low-level policies in reinforcement learning are temporally extended actions that can speed up learning and enable complex behaviours. Recent work in offline reinforcement learning and imitation learning has proposed several techniques for skill discovery from a set of expert trajectories. While these methods are promising, the number K of skills to discover is always a fixed hyperparameter, which requires either prior knowledge about the environment or an additional parameter search to tune it. We first propose a method for offline learning of options (a particular skill framework) exploiting advances in variational inference and continuous relaxations. We then highlight an unexplored connection between Bayesian nonparametrics and offline skill discovery, and show how to obtain a nonparametric version of our model. This version is tractable thanks to a carefully structured approximate posterior with a dynamically-changing number of options, removing the need to specify K. We also show how our nonparametric extension can be applied in other skill frameworks, and empirically demonstrate that our method can outperform state-of-the-art offline skill learning algorithms across a variety of environments.

Transactions on Machine Learning Research

TMLR 2022 | Diagnosing and Fixing Manifold Overfitting in Deep Generative Models

Abstract

Likelihood-based, or explicit, deep generative models use neural networks to construct flexible high-dimensional densities. This formulation directly contradicts the manifold hypothesis, which states that observed data lies on a low-dimensional manifold embedded in high-dimensional ambient space. In this paper we investigate the pathologies of maximum-likelihood training in the presence of this dimensionality mismatch. We formally prove that degenerate optima are achieved wherein the manifold itself is learned but not the distribution on it, a phenomenon we call manifold overfitting. We propose a class of two-step procedures consisting of a dimensionality reduction step followed by maximum-likelihood density estimation, and prove that they recover the data-generating distribution in the nonparametric regime, thus avoiding manifold overfitting. We also show that these procedures enable density estimation on the manifolds learned by implicit models, such as generative adversarial networks, hence addressing a major shortcoming of these models. Several recently proposed methods are instances of our two-step procedures; we thus unify, extend, and theoretically justify a large class of models.

Computer Vision and Pattern Recognition Conference

CVPR 2022 | X-Pool: Cross-Modal Language-Video Attention for Text-Video Retrieval

Abstract

In text-video retrieval, the objective is to learn a cross-modal similarity function between a text and a video that ranks relevant text-video pairs higher than irrelevant pairs. However, videos inherently express a much wider gamut of information than texts. Instead, texts often capture sub-regions of entire videos and are most semantically similar to certain frames within videos. Therefore, for a given text, a retrieval model should focus on the text’s most semantically similar video sub-regions to make a more relevant comparison. Yet, most existing works aggregate entire videos without directly considering text. Common text-agnostic aggregation schemes include mean-pooling or self-attention over the frames, but these are likely to encode misleading visual information not described in the given text. To address this, we propose a cross-modal attention model called X-Pool that reasons between a text and the frames of a video. Our core mechanism is a scaled dot product attention for a text to attend to its most semantically similar frames. We then generate an aggregated video representation conditioned on the text’s attention weights over the frames. We evaluate our method on three benchmark datasets of MSRVTT, MSVD and LSMDC, achieving new state-of-the-art results by up to 12% in relative improvement in Recall@1. Our findings thereby highlight the importance of joint text-video reasoning to extract important visual cues according to text. Full code and demo can be found at: layer6ailabs.github.io/xpool/.
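The core mechanism, a text attending over video frames with scaled dot product attention, can be sketched in a few lines. This is an illustrative simplification rather than the published model: the function name `text_conditioned_pool` and the toy embeddings are hypothetical, and any learned projections or normalization layers the actual model may use are left out.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def text_conditioned_pool(text_emb, frame_embs):
    """Pool frame embeddings using the text embedding as the attention query.

    Returns the attention weights over frames and the pooled video vector.
    Assumes the text and frame embeddings share the same dimension.
    """
    d = len(text_emb)
    # Scaled dot product between the text (query) and each frame (key).
    scores = [
        sum(t * f for t, f in zip(text_emb, frame)) / math.sqrt(d)
        for frame in frame_embs
    ]
    weights = softmax(scores)
    # Weighted sum of frames (values) gives a text-conditioned video vector.
    pooled = [
        sum(w * frame[i] for w, frame in zip(weights, frame_embs))
        for i in range(d)
    ]
    return weights, pooled

# Toy example: the first frame is aligned with the text, the last opposes it.
text = [1.0, 0.0]
frames = [[2.0, 0.0], [0.0, 2.0], [-2.0, 0.0]]
weights, pooled = text_conditioned_pool(text, frames)
print(weights, pooled)
```

Unlike mean-pooling, which would weight all three frames equally, the frame most similar to the text dominates the pooled representation here.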

Nature Scientific Reports

Nature Scientific Reports | Federated learning and differential privacy for medical image analysis

Abstract

The artificial intelligence revolution has been spurred forward by the availability of large-scale datasets. In contrast, the paucity of large-scale medical datasets hinders the application of machine learning in healthcare. The lack of publicly available multi-centric and diverse datasets mainly stems from confidentiality and privacy concerns around sharing medical data. To demonstrate a feasible path forward in medical imaging, we conduct a case study of applying a differentially private federated learning framework for analysis of histopathology images, the largest and perhaps most complex medical images. We study the effects of IID and non-IID distributions along with the number of healthcare providers, i.e., hospitals and clinics, and the individual dataset sizes, using The Cancer Genome Atlas (TCGA) dataset, a public repository, to simulate a distributed environment. We empirically compare the performance of private, distributed training to conventional training and demonstrate that distributed training can achieve similar performance with strong privacy guarantees. We also study the effect of different source domains for histopathology images by evaluating the performance using external validation. Our work indicates that differentially private federated learning is a viable and reliable framework for the collaborative development of machine learning models in medical image analysis.

ACM Conference on Recommender Systems

RecSys 2021 | User Engagement Modeling with Deep Learning and Language Models

Abstract

Twitter is one of the main information sharing platforms in the world with millions of tweets created daily. To ensure that users get relevant content in their feeds, Twitter extensively leverages machine learning-based recommender systems. However, given the large volume of data, all production systems must be both memory and CPU efficient. In the 2021 ACM RecSys challenge, Twitter simulates the production environment with a large dataset of almost 1 billion user-tweet engagements that span a 4-week period. The goal is to accurately predict engagement type, and all models are subject to strict run-time constraints during inference. In this paper we present our approach to the 2021 ACM RecSys challenge. We use a hybrid pipeline and leverage gradient boosting, neural network classifiers and multi-lingual language models to maximize performance. Our approach achieves strong results placing 3rd on the public leaderboard. We further explore the complexity of language model inference, and show that through distillation it can be possible to run such models in highly constrained production environments.

International Conference on Computer Vision

ICCV 2021 | Context-aware Scene Graph Generation with Seq2Seq Transformers

Abstract

Scene graph generation is an important task in computer vision aimed at improving the semantic understanding of the visual world. In this task, the model needs to detect objects and predict visual relationships between them. Most of the existing models predict relationships in parallel assuming their independence. While there are different ways to capture these dependencies, we explore a conditional approach motivated by the sequence-to-sequence (Seq2Seq) formalism. Different from the previous research, our proposed model predicts visual relationships one at a time in an autoregressive manner by explicitly conditioning on the already predicted relationships. Drawing from translation models in NLP, we propose an encoder-decoder model built using Transformers where the encoder captures global context and long range interactions. The decoder then makes sequential predictions by conditioning on the scene graph constructed so far. In addition, we introduce a novel reinforcement learning-based training strategy tailored to Seq2Seq scene graph generation. By using a self-critical policy gradient training approach with Monte Carlo search we directly optimize for the (mean) recall metrics and bridge the gap between training and evaluation. Experimental results on two public benchmark datasets demonstrate that our Seq2Seq learning approach achieves strong empirical performance, outperforming previous state-of-the-art, while remaining efficient in terms of training and inference time.

Neural Information Processing Systems Conference

NeurIPS 2021 | Rectangular Flows for Manifold Learning

Abstract

Normalizing flows are invertible neural networks with tractable change-of-volume terms, which allow optimization of their parameters to be efficiently performed via maximum likelihood. However, data of interest are typically assumed to live in some (often unknown) low-dimensional manifold embedded in a high-dimensional ambient space. The result is a modelling mismatch since — by construction — the invertibility requirement implies high-dimensional support of the learned distribution. Injective flows, mappings from low- to high-dimensional spaces, aim to fix this discrepancy by learning distributions on manifolds, but the resulting volume-change term becomes more challenging to evaluate. Current approaches either avoid computing this term entirely using various heuristics, or assume the manifold is known beforehand and therefore are not widely applicable. Instead, we propose two methods to tractably calculate the gradient of this term with respect to the parameters of the model, relying on careful use of automatic differentiation and techniques from numerical linear algebra. Both approaches perform end-to-end nonlinear manifold learning and density estimation for data projected onto this manifold. We study the trade-offs between our proposed methods, empirically verify that we outperform approaches ignoring the volume-change term by more accurately learning manifolds and the corresponding distributions on them, and show promising results on out-of-distribution detection. Our code is available at https://github.com/layer6ai-labs/rectangular-flows.
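For reference, the volume-change term discussed above can be written out explicitly. The following is the standard change-of-variables formula for an injective map, stated here as background rather than taken from the paper; the symbols $f_\theta$, $J_\theta$, $d$ and $D$ are notation introduced for this sketch.

```latex
% Injective map f_\theta : \mathbb{R}^d \to \mathbb{R}^D with d < D,
% Jacobian J_\theta(z) \in \mathbb{R}^{D \times d}, and x on the image of f_\theta.
p_X(x) = p_Z(z)\,\bigl|\det\bigl(J_\theta(z)^\top J_\theta(z)\bigr)\bigr|^{-1/2},
\qquad z = f_\theta^{-1}(x)

% Gradient of the log-determinant with respect to a parameter \theta_k (Jacobi's formula):
\frac{\partial}{\partial \theta_k} \log\det\bigl(J_\theta^\top J_\theta\bigr)
  = \operatorname{tr}\!\Bigl[\bigl(J_\theta^\top J_\theta\bigr)^{-1}
    \frac{\partial \bigl(J_\theta^\top J_\theta\bigr)}{\partial \theta_k}\Bigr]
```

When $d = D$ the first expression reduces to the usual normalizing-flow formula with $|\det J_\theta(z)|^{-1}$. The trace form of the gradient is the general identity; how the paper evaluates it tractably is described only at a high level in the abstract.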

Neural Information Processing Systems Conference

NeurIPS 2021 | Tractable Density Estimation on Learned Manifolds with Conformal Embedding Flows

Abstract

Normalizing flows are generative models that provide tractable density estimation via an invertible transformation from a simple base distribution to a complex target distribution. However, this technique cannot directly model data supported on an unknown low-dimensional manifold, a common occurrence in real-world domains such as image data. Recent attempts to remedy this limitation have introduced geometric complications that defeat a central benefit of normalizing flows: exact density estimation. We recover this benefit with Conformal Embedding Flows, a framework for designing flows that learn manifolds with tractable densities. We argue that composing a standard flow with a trainable conformal embedding is the most natural way to model manifold-supported data. To this end, we present a series of conformal building blocks and apply them in experiments with synthetic and real-world data to demonstrate that flows can model manifold-supported distributions without sacrificing tractable likelihoods.
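To see why a conformal embedding keeps the density tractable, it may help to write out the defining property. This is a sketch under the usual definition of a conformal map; the symbols $g$, $J_g$, $\lambda$ and $d$ are notation introduced here, not taken from the paper.

```latex
% A conformal embedding g : \mathbb{R}^d \to \mathbb{R}^D has a Jacobian whose
% columns are orthogonal with a common scale \lambda(z) > 0:
J_g(z)^\top J_g(z) = \lambda(z)^2 \, I_d

% The manifold volume-change term then collapses to a scalar power:
\bigl|\det\bigl(J_g(z)^\top J_g(z)\bigr)\bigr|^{-1/2} = \lambda(z)^{-d}

% so the log-density on the manifold, for x = g(z), is
\log p_X(x) = \log p_Z(z) - d \,\log \lambda(z)
```

Under this property no $d \times d$ determinant needs to be computed, provided $\lambda(z)$ is available in closed form for each building block.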

Computer Vision and Pattern Recognition Conference

CVPR 2021 | Weakly Supervised Action Selection Learning in Video

Abstract

Localizing actions in video is a core task in computer vision. The weakly supervised temporal localization problem investigates whether this task can be adequately solved with only video-level labels, significantly reducing the amount of expensive and error-prone annotation that is required. A common approach is to train a frame-level classifier where frames with the highest class probability are selected to make a video-level prediction. Frame-level activations are then used for localization. However, the absence of frame-level annotations causes the classifier to impart class bias on every frame. To address this, we propose the Action Selection Learning (ASL) approach to capture the general concept of action, a property we refer to as “actionness”. Under ASL, the model is trained with a novel class-agnostic task to predict which frames will be selected by the classifier. Empirically, we show that ASL outperforms leading baselines on two popular benchmarks, THUMOS-14 and ActivityNet-1.2, with 10.3% and 5.7% relative improvement respectively. We further analyze the properties of ASL and demonstrate the importance of actionness.

International Conference on Learning Representations

ICLR 2021 | C-Learning: Horizon-Aware Cumulative Accessibility Estimation

Abstract

Multi-goal reaching is an important problem in reinforcement learning needed to achieve algorithmic generalization. Despite recent advances in this field, current algorithms suffer from three major challenges: high sample complexity, learning only a single way of reaching the goals, and difficulties in solving complex motion planning tasks. In order to address these limitations, we introduce the concept of cumulative accessibility functions, which measure the reachability of a goal from a given state within a specified horizon. We show that these functions obey a recurrence relation, which enables learning from offline interactions. We also prove that optimal cumulative accessibility functions are monotonic in the planning horizon. Additionally, our method can trade off speed and reliability in goal-reaching by suggesting multiple paths to a single goal depending on the provided horizon. We evaluate our approach on a set of multi-goal discrete and continuous control tasks. We show that our method outperforms state-of-the-art goal-reaching algorithms in success rate, sample complexity, and path optimality. Our code is available at this https URL, and additional visualizations can be found at this https URL.

The Web Conference

The Web Conference 2021 | HGCF: Hyperbolic Graph Convolution Networks for Collaborative Filtering

Abstract

Hyperbolic spaces offer a rich setup to learn embeddings with superior properties that have been leveraged in areas such as computer vision, natural language processing and computational biology. Recently, several hyperbolic approaches have been proposed to learn robust representations for users and items in the recommendation setting. However, these approaches don’t capture the higher order relationships that typically exist in the recommendation domain. Graph convolutional neural networks (GCNs), on the other hand, excel at capturing higher order information by applying multiple levels of aggregation to local representations. In this paper we combine these frameworks in a novel way, by proposing a hyperbolic GCN model for collaborative filtering. We demonstrate that our model can be effectively learned with a margin ranking loss, and show that hyperbolic space has desirable properties under the rank margin setting. At test time, inference in our model is done using the hyperbolic distance which preserves the structure of the learned space. We conduct extensive empirical analysis on three public benchmarks and compare against a large set of baselines. Our approach achieves highly competitive results and outperforms leading baselines including the Euclidean GCN counterpart. We further study the properties of the learned hyperbolic embeddings and show that they offer meaningful insights into the data.
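As a rough illustration of scoring with a hyperbolic distance and training with a margin ranking loss, the sketch below uses the Poincaré ball model. The choice of the Poincaré ball, the generic hinge form of the loss, the margin value and the toy embeddings are all assumptions made for this example; the abstract does not specify which hyperbolic model or exact loss the paper uses.

```python
import math

def poincare_distance(u, v):
    """Geodesic distance between two points inside the unit Poincare ball."""
    sq_diff = sum((a - b) ** 2 for a, b in zip(u, v))
    sq_u = sum(a * a for a in u)
    sq_v = sum(b * b for b in v)
    # Both points must lie strictly inside the unit ball (norm < 1).
    arg = 1.0 + 2.0 * sq_diff / ((1.0 - sq_u) * (1.0 - sq_v))
    return math.acosh(arg)

def margin_ranking_loss(user, pos_item, neg_item, margin):
    """Hinge loss asking the positive item to be closer than the negative
    item by at least `margin` (a generic form, assumed for illustration)."""
    d_pos = poincare_distance(user, pos_item)
    d_neg = poincare_distance(user, neg_item)
    return max(0.0, margin + d_pos - d_neg)

# Hypothetical 2-D embeddings inside the unit ball.
user = [0.1, 0.2]
liked_item = [0.1, 0.25]
other_item = [-0.5, -0.5]

# The liked item is already much closer, so the loss is zero.
loss_ok = margin_ranking_loss(user, liked_item, other_item, margin=0.5)
# Swapping the roles violates the margin and gives a positive loss.
loss_bad = margin_ranking_loss(user, other_item, liked_item, margin=0.5)
print(loss_ok, loss_bad)
```

At inference time the same distance function could be used to rank candidate items for a user, with smaller distance meaning a stronger recommendation.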

npj | digital medicine

Predicting adverse outcomes due to diabetes complications with machine learning using administrative health data

Abstract

Across jurisdictions, government and health insurance providers hold a large amount of data from patient interactions with the healthcare system. We aimed to develop a machine learning-based model for predicting adverse outcomes due to diabetes complications using administrative health data from the single-payer health system in Ontario, Canada. A Gradient Boosting Decision Tree model was trained on data from 1,029,366 patients, validated on 272,864 patients, and tested on 265,406 patients. Discrimination was assessed using the AUC statistic and calibration was assessed visually using calibration plots overall and across population subgroups. Our model predicting three-year risk of adverse outcomes due to diabetes complications (hyper/hypoglycemia, tissue infection, retinopathy, cardiovascular events, amputation) included 700 features from multiple diverse data sources and had strong discrimination (average test AUC = 77.7, range 77.7–77.9). Through the design and validation of a high-performance model to predict diabetes complications adverse outcomes at the population level, we demonstrate the potential of machine learning and administrative health data to inform health planning and healthcare resource allocation for diabetes management.

CMAJ Open

Characterizing early Canadian federal, provincial, territorial and municipal nonpharmaceutical interventions in response to COVID-19: a descriptive analysis

Abstract

Nonpharmaceutical interventions (NPIs) are the primary tools to mitigate early spread of the coronavirus disease 2019 (COVID-19) pandemic; however, such policies are implemented variably at the federal, provincial or territorial, and municipal levels without centralized documentation. We describe the development of the comprehensive open Canadian Non-Pharmaceutical Intervention (CAN-NPI) data set, which identifies and classifies all NPIs implemented in regions across Canada in response to COVID-19, and provides an accompanying description of geographic and temporal heterogeneity.

COVID-19 Publication to Nature Scientific Reports

Evolutionary and structural analyses of SARS-CoV-2 D614G spike protein mutation now documented worldwide

Abstract

The COVID-19 pandemic, caused by the Severe Acute Respiratory Syndrome Coronavirus 2 (SARS-CoV-2), was declared on March 11, 2020 by the World Health Organization. As of the 31st of May, 2020, there have been more than 6 million COVID-19 cases diagnosed worldwide and over 370,000 deaths, according to Johns Hopkins. Thousands of SARS-CoV-2 strains have been sequenced to date, providing a valuable opportunity to investigate the evolution of the virus on a global scale. We performed a phylogenetic analysis of over 1,225 SARS-CoV-2 genomes spanning from late December 2019 to mid-March 2020. We identified a missense mutation, D614G, in the spike protein of SARS-CoV-2, which has emerged as a predominant clade in Europe (954 of 1,449 (66%) sequences) and is spreading worldwide (1,237 of 2,795 (44%) sequences). Molecular dating analysis estimated the emergence of this clade around mid-to-late January (10–25 January) 2020. We also applied structural bioinformatics to assess the potential impact of D614G on the virulence and epidemiology of SARS-CoV-2. In silico analyses on the spike protein structure suggest that the mutation is most likely neutral to protein function as it relates to its interaction with the human ACE2 receptor. The lack of clinical metadata available prevented our investigation of association between viral clade and disease severity phenotype. Future work that can leverage clinical outcome data with both viral and human genomic diversity is needed to monitor the pandemic.

The ACM Conference on Recommender Systems

TAFA: Two-headed Attention Fused Autoencoder for Context-Aware Recommendations

Abstract

Collaborative filtering with implicit feedback is a ubiquitous class of recommendation problems where only positive interactions such as purchases or clicks are observed. Autoencoder-based recommendation models have shown strong performance on many implicit feedback benchmarks. However, these models tend to suffer from popularity bias making recommendations less personalized. User-generated reviews contain a rich source of preference information, often with specific details that are important to each user, and can help mitigate the popularity bias. Since not all reviews are equally useful, existing work has been exploring various forms of attention to distill relevant information. In the majority of proposed approaches, representations from implicit feedback and review branches are simply concatenated at the end to generate predictions. This can prevent the model from learning deeper correlations between the two modalities and affect prediction accuracy. To address these problems, we propose a novel Two-headed Attention Fused Autoencoder (TAFA) model that jointly learns representations from user reviews and implicit feedback to make recommendations. We apply early and late modality fusion which allows the model to fully correlate and extract relevant information from both input sources. To further combat popularity bias, we leverage the Noise Contrastive Estimation (NCE) objective to “de-popularize” the fused user representation via a two-headed decoder architecture. Empirically, we show that TAFA outperforms leading baselines on multiple real-world benchmarks. Moreover, by tracing attention weights back to reviews we can provide explanations for the generated recommendations and gain further insights into user preferences.

ACM RecSys Challenge 2020

2nd Place

Predicting Twitter Engagement With Deep Language Models

Abstract

Twitter has become one of the main information sharing platforms for millions of users worldwide. Numerous tweets are created daily, many with highly time-sensitive content such as breaking news, new multimedia content or personal updates. Consequently, accurately recommending relevant tweets to users in a timely manner is a highly important and challenging problem. The 2020 ACM RecSys Challenge is aimed at benchmarking leading recommendation models for this task. The challenge is based on a large and recent dataset of over 200M tweet engagements released by Twitter with content in over 50 languages. In this work we present our approach, where we leverage recent advances in deep language modeling and attention architectures to combine information from extracted features, user engagement history and target tweet content. We first fine-tune the leading multilingual language models M-BERT and XLM-R on Twitter data. Embeddings from these models are used to extract tweet and user history representations. We then combine all components together and jointly train them to maximize engagement prediction accuracy. Our approach achieves highly competitive performance, placing 2nd on the final private leaderboard.

International Conference on Machine Learning (ICML 2020)

Improving Transformer Optimization Through Better Initialization

Abstract

The Transformer architecture has achieved considerable success recently; the key component of the Transformer is the attention layer that enables the model to focus on important regions within an input sequence. Gradient optimization with attention layers can be notoriously difficult, requiring tricks such as learning rate warmup to prevent divergence. As Transformer models are becoming larger and more expensive to train, recent research has focused on understanding and improving optimization in these architectures. In this work our contributions are two-fold: we first investigate and empirically validate the source of optimization problems in the encoder-decoder Transformer architecture; we then propose a new weight initialization scheme with theoretical justification that enables training without warmup or layer normalization. Empirical results on public machine translation benchmarks show that our approach achieves leading accuracy, allowing us to train deep Transformer models with 200 layers in both encoder and decoder (over 1000 attention/MLP blocks) without difficulty.

Conference on Neural Information Processing Systems (NeurIPS 2019)

Guided Similarity Separation for Image Retrieval (Oral)

Abstract

Despite recent progress in computer vision, image retrieval remains a challenging open problem. Numerous variations such as view angle, lighting and occlusion make it difficult to design models that are both robust and efficient. Many leading methods traverse the nearest neighbor graph to exploit higher order neighbor information and uncover the highly complex underlying manifold. In this work we propose a different approach where we leverage graph convolutional networks to directly encode neighbor information into image descriptors. We further leverage ideas from clustering and manifold learning, and introduce an unsupervised loss based on pairwise separation of image similarities. Empirically, we demonstrate that our model is able to successfully learn a new descriptor space that significantly improves retrieval accuracy, while still allowing efficient inner product inference. Experiments on five public benchmarks show highly competitive performance with up to 24% relative improvement in mAP over leading baselines.
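For readers unfamiliar with the mAP metric quoted above, the following is a minimal sketch of the textbook definition of mean average precision over ranked retrieval lists. The function names and toy data are illustrative assumptions; individual benchmarks may apply their own evaluation protocols, which this sketch does not reproduce.

```python
def average_precision(ranking, relevant):
    """Average of precision@k taken at each rank k where a relevant item appears."""
    hits = 0
    precision_sum = 0.0
    for k, item in enumerate(ranking, start=1):
        if item in relevant:
            hits += 1
            precision_sum += hits / k
    # Items that are relevant but never retrieved contribute zero.
    return precision_sum / len(relevant) if relevant else 0.0


def mean_average_precision(rankings, relevant_sets):
    """Mean of average precision over all queries."""
    scores = [average_precision(r, rel) for r, rel in zip(rankings, relevant_sets)]
    return sum(scores) / len(scores)


# Toy example (hypothetical image ids): two queries.
rankings = [["a", "b", "c", "d"], ["x", "y"]]
relevant_sets = [{"a", "c"}, {"y"}]
map_score = mean_average_precision(rankings, relevant_sets)
```

A relative improvement in mAP is then computed as `(new_map - baseline_map) / baseline_map`.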

YouTube-8M Video Understanding Challenge

1st Place

Cross-Class Relevance Learning for Temporal Concept Localization

Abstract

We present a novel Cross-Class Relevance Learning approach for the task of temporal concept localization. Most localization architectures rely on feature extraction layers followed by a classification layer which outputs class probabilities for each segment. However, in many real-world applications classes can exhibit complex relationships that are difficult to model with this architecture. In contrast, we propose to incorporate target class and class-related features as input, and learn a pairwise binary model to predict general segment to class relevance. This facilitates learning of shared information between classes, and allows for arbitrary class-specific feature engineering. We apply this approach to the 3rd YouTube-8M Video Understanding Challenge together with other leading models, and achieve first place out of over 280 teams. In this paper we describe our approach and show some empirical results.

Open Images 2019 Visual Relationship Challenge

1st Place

Learning Effective Visual Relationship Detector on 1 GPU

Abstract

We present our winning solution to the Open Images 2019 Visual Relationship challenge. This is the largest challenge of its kind to date, with nearly 9 million training images. The challenge task consists of detecting objects and identifying relationships between them in complex scenes. Our solution has three stages. First, an object detection model is fine-tuned for the challenge classes using a novel weight transfer approach. Then, spatio-semantic and visual relationship models are trained on candidate object pairs. Finally, features and model predictions are combined to generate the final relationship prediction. Throughout the challenge we focused on minimizing the hardware requirements of our architecture. Specifically, our weight transfer approach enables much faster optimization, allowing the entire architecture to be trained on a single GPU in under two days. In addition to efficient optimization, our approach also achieves superior accuracy, winning first place out of over 200 teams and outperforming the second place team by over 5% on the held-out private leaderboard.

ACM RecSys Challenge 2019

2nd Place

Robust Contextual Models for In-Session Personalization

Abstract

Most online activity happens in the context of a session; to enable better user experience many online platforms aim to dynamically refine their recommendations as sessions progress. A popular approach is to continuously re-rank recommendations based on current session activity and past session logs. This motivates the 2019 ACM RecSys Challenge organised by Trivago. Using the session log dataset released by Trivago, the challenge aims to benchmark models for in-session re-ranking of hotel recommendations. In this paper we present our approach to this challenge where we first contextualize sessions in a global and local manner, and then train gradient boosting and deep learning models for re-ranking. Our team achieved 2nd place out of over 570 teams, with less than 0.3% relative difference in Mean Reciprocal Rank from the 1st place team.
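As a point of reference for the Mean Reciprocal Rank figure quoted above, here is a minimal sketch of the standard metric: for each session, take the reciprocal of the rank at which the clicked item appears in the re-ranked list, then average over sessions. The function name and the toy hotel ids are assumptions for illustration, not the challenge's official evaluation code.

```python
def mean_reciprocal_rank(ranked_lists, clicked_items):
    """Average of 1/rank of the clicked item per session (0 if it is not ranked)."""
    total = 0.0
    for ranking, clicked in zip(ranked_lists, clicked_items):
        if clicked in ranking:
            total += 1.0 / (ranking.index(clicked) + 1)  # ranks are 1-based
    return total / len(ranked_lists)


# Toy example (hypothetical hotel ids): the click lands at rank 1, 2 and 3.
rankings = [["h1", "h2", "h3"], ["h4", "h5", "h6"], ["h7", "h8", "h9"]]
clicks = ["h1", "h5", "h9"]
mrr = mean_reciprocal_rank(rankings, clicks)  # (1 + 1/2 + 1/3) / 3
```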

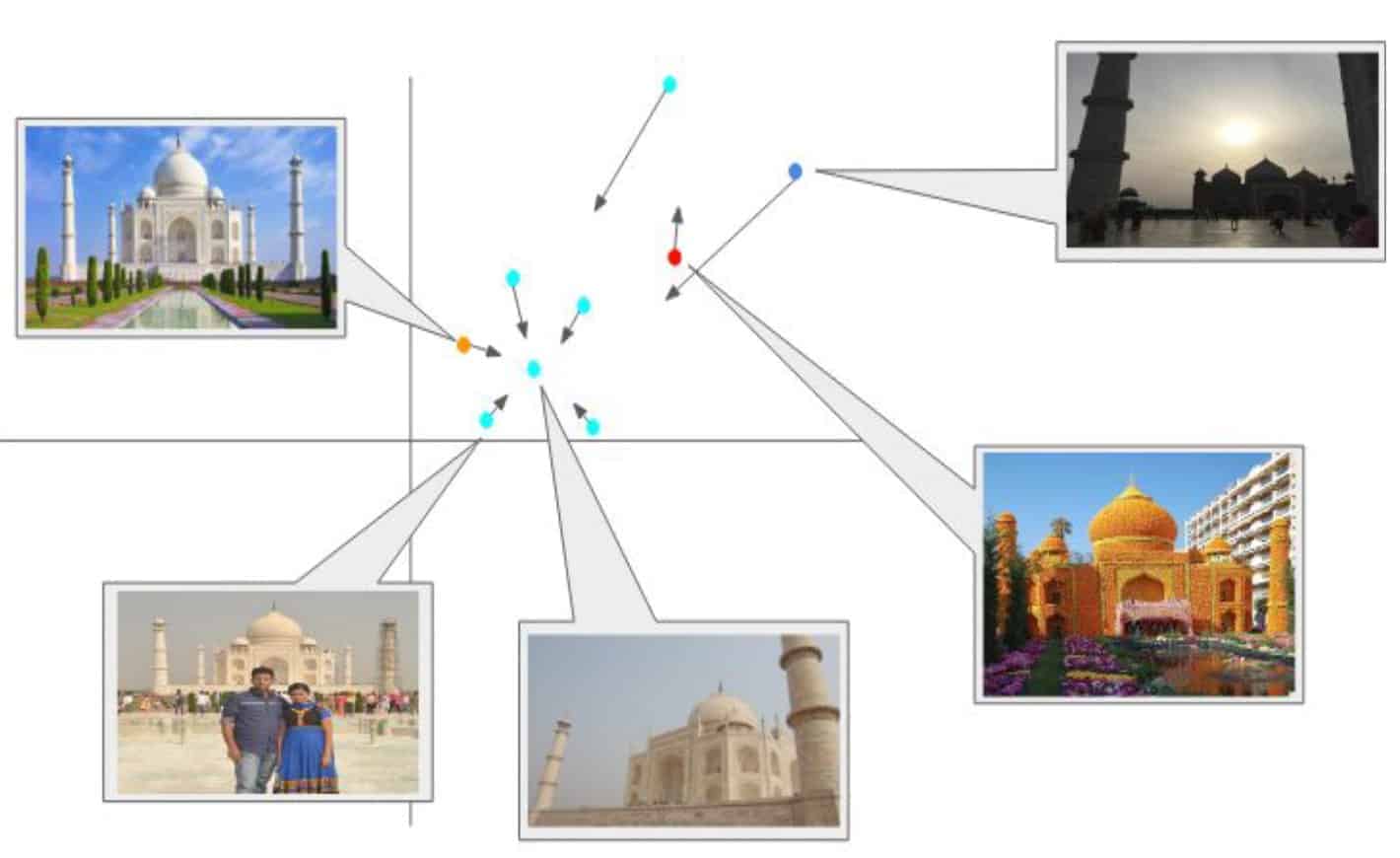

Conference on Computer Vision and Pattern Recognition (CVPR 2019)

Explore-Exploit Graph Traversal for Image Retrieval

Abstract

We propose a novel graph-based approach for image retrieval. Given a nearest neighbor graph produced by the global descriptor model, we traverse it by alternating between exploit and explore steps. The exploit step maximally utilizes the immediate neighborhood of each vertex, while the explore step traverses vertices that are farther away in the descriptor space. By combining these two steps we can better capture the underlying image manifold, and successfully retrieve relevant images that are visually dissimilar to the query. Our traversal algorithm is conceptually simple, has few tunable parameters and can be implemented with basic data structures. This enables fast real-time inference for previously unseen queries with minimal memory overhead. Despite relative simplicity, we show highly competitive results on multiple public benchmarks, including the largest image retrieval dataset that is currently publicly available.
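The alternation between exploit and explore steps can be illustrated with a simplified sketch. This is not the paper's algorithm: the similarity threshold, the rule that a vertex keeps the similarity of the first edge that reaches it, and all names below are assumptions chosen to show the general idea with basic data structures (a dictionary for the graph and a heap for the frontier).

```python
import heapq


def alternate_traversal(graph, query, threshold, num_results):
    """graph maps each vertex to a list of (neighbour, similarity) pairs."""
    retrieved = []
    seen = {query}      # vertices already queued or retrieved
    frontier = []       # max-heap emulated with negated similarities
    pending = [query]   # accepted vertices whose neighbours are not expanded yet

    while len(retrieved) < num_results:
        # Expand the neighbourhoods of the most recently accepted vertices.
        for u in pending:
            for v, sim in graph.get(u, []):
                if v not in seen:
                    seen.add(v)
                    heapq.heappush(frontier, (-sim, v))
        pending = []
        if not frontier:
            break
        while frontier and len(retrieved) + len(pending) < num_results:
            best_sim = -frontier[0][0]
            # Exploit: keep accepting candidates above the threshold.
            # Explore: if nothing has been accepted in this round, take the
            # single best candidate anyway so the traversal can move farther.
            if best_sim < threshold and pending:
                break
            _, v = heapq.heappop(frontier)
            pending.append(v)
        retrieved.extend(pending)
    return retrieved[:num_results]


# Toy graph (hypothetical): "d" is only reachable through the weak edge q-b.
toy_graph = {
    "q": [("a", 0.9), ("b", 0.4)],
    "a": [("c", 0.8)],
    "b": [("d", 0.95)],
}
result = alternate_traversal(toy_graph, "q", threshold=0.7, num_results=4)
```

In the toy graph, the confident neighbours `a` and `c` are retrieved first; only once no candidate clears the threshold does the traversal follow the weak edge to `b`, which in turn exposes `d`.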

Google Landmark Retrieval Challenge 2019

3rd Place

Semi-Supervised Exploration in Image Retrieval

Abstract

We present our solution to Landmark Image Retrieval Challenge 2019. This challenge was based on the large Google Landmarks Dataset V2. The goal was to retrieve all database images containing the same landmark for every provided query image. Our solution is a combination of global and local models to form an initial KNN graph. We then use a novel extension of the recently proposed graph traversal method EGT referred to as semi-supervised EGT to refine the graph and retrieve better candidates.

➢DIR concatenated with GeM trained on Landmark-v1 dataset.

➢QE and spatial verification (RANSAC) using DELF-V2

➢Novel Contribution: Semi-supervised extension of EGT for final ranking.

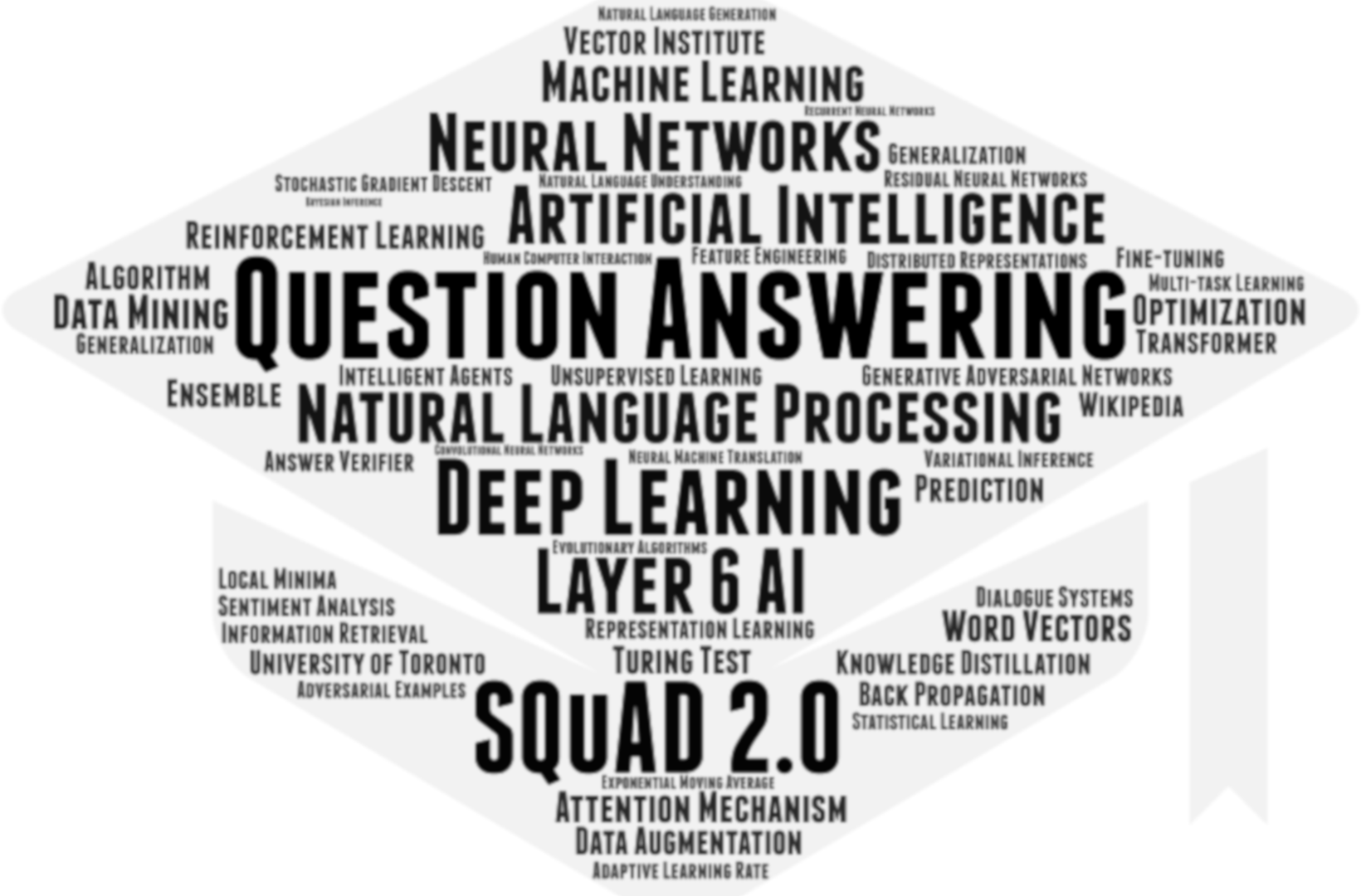

Stanford Question Answering Dataset 2.0

2nd Place

Top-performing Natural Language Processing Model

Abstract

Released in 2016, the Stanford Question Answering Dataset (SQuAD) is a reading comprehension dataset consisting of questions posed by crowdworkers on a set of Wikipedia articles. The answer to every question is a segment of text, or span, from the corresponding reading passage, or the question might be unanswerable. Performance on SQuAD surpassed human performance in 2018, and in response the SQuAD2.0 challenge was released, which combines the 100,000 questions in SQuAD1.1 with over 50,000 new, unanswerable questions written adversarially by crowdworkers to look similar to answerable ones. Solutions to SQuAD2.0 must not only answer questions when possible, but also determine when no answer is supported by the paragraph and abstain from answering. The Layer 6 NLP Team developed a model that ranked 2nd on the leaderboard (as of March 28, 2019).

Spotify RecSys 2018

Winner

Two-stage Model for Automatic Playlist Continuation at Scale

Abstract

The paper presents a two-stage model to evaluate and advance current state-of-the-art in automated playlist continuation using a large scale dataset released by Spotify.

Automatic playlist continuation is a prominent problem in music recommendation. A significant portion of music consumption is now done online through playlists and playlist-like online radio stations. Manually compiling playlists for consumers is a highly time-consuming task that is difficult to do at scale given the diversity of tastes and the large amount of musical content available. Consequently, automated playlist continuation has received increasing attention recently. The 2018 ACM RecSys Challenge is dedicated to evaluating and advancing the current state of the art in automated playlist continuation using a large-scale dataset released by Spotify. In this paper we present our approach to this challenge.

We use a two-stage model where the first stage is optimized for fast retrieval, and the second stage re-ranks retrieved candidates, maximizing the accuracy at the top of the recommended list. Our team vl6 achieved 1st place in both main and creative tracks out of over 100 teams.

RSNA Pneumonia Detection Challenge 2018

4th Place

4th Place in the RSNA Pneumonia Detection Challenge | Kaggle

Abstract

Pneumonia accounts for over 15% of all deaths of children under 5 years old internationally. In 2015, 920,000 children under the age of 5 died from the disease. In this challenge, the task was to build an algorithm to detect a visual signal for pneumonia in medical images. Layer 6 collaborated with 16Bit and developed an ensemble of 15 state-of-the-art object detection models (10 Mask RCNN, 3 YOLOv3, and 2 Faster RCNN models), in combination with a classifier (DenseNet-121 architecture pre-trained on the NIH Chest X-rays data set) that served to reduce false positives, to detect pneumonia in chest X-rays. We found that using a relaxed detection threshold for object detection, whilst requiring unanimous agreement among the detectors, effectively balanced the need to minimize both false positives and false negatives. Adaptive histogram equalization was used to improve image contrast as a data preprocessing step. We used age, sex, and view position as inputs into the penultimate layer of the classifier to improve performance.
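The combination of a relaxed score threshold with unanimous agreement can be sketched as follows. This is a minimal illustration, not the team's pipeline: treating two boxes as agreeing when their intersection-over-union exceeds a cutoff, using the first detector's boxes as the reference, and the specific threshold values are all assumptions.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def unanimous_boxes(detections, score_threshold=0.2, iou_threshold=0.5):
    """detections holds one list of (box, score) pairs per detector."""
    # Relaxed threshold: keep low-confidence boxes from every detector.
    kept = [[box for box, score in dets if score >= score_threshold]
            for dets in detections]
    if not kept:
        return []
    reference, others = kept[0], kept[1:]
    # Unanimity: a reference box survives only if every other detector
    # produced at least one overlapping box.
    return [box for box in reference
            if all(any(iou(box, o) >= iou_threshold for o in other)
                   for other in others)]


# Toy example (hypothetical boxes and scores) with three detectors.
detector_a = [((10, 10, 50, 50), 0.30), ((100, 100, 120, 120), 0.90)]
detector_b = [((12, 12, 52, 52), 0.25)]
detector_c = [((11, 9, 49, 51), 0.40), ((100, 100, 120, 120), 0.10)]
agreed = unanimous_boxes([detector_a, detector_b, detector_c])
```

In this toy case the first box survives even though no detector is very confident about it, while the second box is dropped despite one detector's high score, because the others do not support it.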

Google Landmark Retrieval Challenge 2018

2nd Place

Modified Maximum Spanning Tree Clustering for Large-Scale Image Retrieval

Abstract

Retrieve all the images depicting the same landmark regardless of visual similarity.

Put images with the same landmark closer to the approximated centers of the landmark clusters iteratively.

- Involve local descriptors and geometric verification.

- Test images can be used as additional bridging.

ACM RecSys Challenge 2017

Winner

Content-based Neighbor Models for Cold Start in Recommender Systems

Abstract